So Recursive It Hurts

Create static websites with Hugo, Docker, and Amazon S3

Hugo is the new kid on the block in the world of static site generators. Docker has set the DevOps world ablaze by popularizing container technology. Naturally, I’ll be perpetuating the hype train for both with my blog. Let’s run Hugo in a Docker container, generate my website, and host it using Amazon S3.

Why Hugo?

If I want to host a blog using a platform like WordPress, I need to provision, maintain, and (most importantly) pay for a LAMP stack just to get started. Don’t get me wrong, I like WordPress and I’ve hosted sites with it in the past. It comes with a rich set of features like post scheduling, WYSIWYG editors, extensive plugins, and so on. However, for my personal blog that’s entirely too much work. Static site generators like Hugo follow a different model. I download a website template, write a post in Markdown, then Hugo renders my content into a complete set of static HTML pages. Now I can host my site anywhere and all I need to worry about are bandwidth fees.

Hugo comes with several pre-built binaries that allow you to run it out of the box on most operating systems. Sadly, extra features like syntax highlighting require installing additional dependencies to get up and running. I’m lazy, so memorizing OS-specific commands for a project is an immediate no-go. Which version of Python do I install? Which packages do I download with pip? Let’s use Docker containers to run Hugo with batteries included.

Docker to the rescue

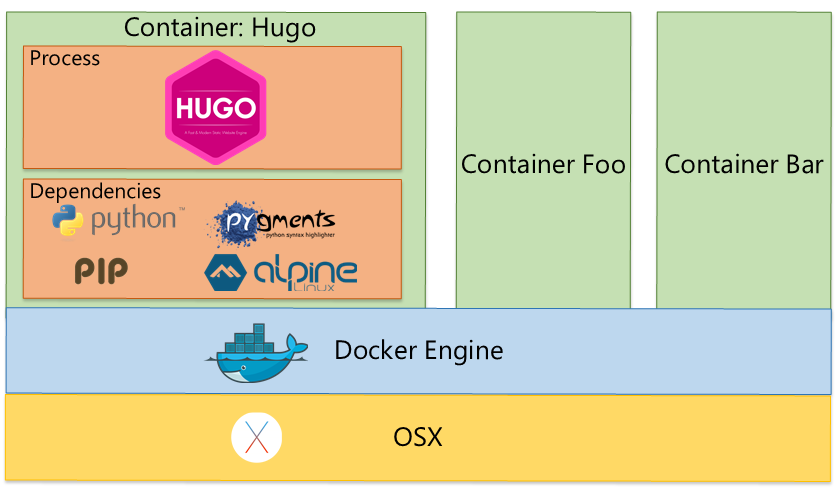

Using Docker, we can create a tiny sandboxed environment, a container, that bundles everything we need to run Hugo. Containers can include almost anything, such as a filesystem, OS binaries, the Hugo binary, and additional dependencies for extra features. This is basically static linking on steroids. I no longer have to remember custom install instructions per machine to get the full Hugo experience. I just download the Docker image and I’m ready to go. Note that Docker runs on Linux, macOS, and Windows.
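To make "bundles everything" a bit more concrete, here is a rough sketch of what a Hugo image definition could look like. This is not the actual Dockerfile behind alani/hugo, which isn't shown in this post. It assumes an Alpine base image whose package repositories ship `hugo` and `py3-pygments`, and the `hugo-image` directory and `my-hugo` tag are made-up names. Recent Hugo releases highlight code with a built-in library, so the Pygments package may be unnecessary on a current version.

```shell
# Sketch only: the real alani/hugo Dockerfile is not shown in this post.
# Assumes an Alpine base image whose repositories provide hugo and py3-pygments.
mkdir -p hugo-image
cat > hugo-image/Dockerfile <<'EOF'
FROM alpine:3.19
# Hugo itself plus Pygments, which older Hugo releases used for syntax highlighting
RUN apk add --no-cache hugo py3-pygments
# The site source is expected to be mounted here
WORKDIR /src
# Port used by Hugo's built-in web server
EXPOSE 1313
# Every argument given after the image name in `docker run` is passed to hugo
ENTRYPOINT ["hugo"]
EOF

# To build it under a hypothetical tag (requires Docker):
#   docker build -t my-hugo hugo-image
```

The `ENTRYPOINT` line is what lets a container behave like the Hugo command itself: whatever follows the image name on the `docker run` command line ends up as Hugo's arguments.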

Run Hugo with Docker to build our site

Images for containers can be shared, which means that once a Hugo image is defined, anyone with Docker installed can download and run it. Let’s grab Hugo from the public image I uploaded to Docker Hub.

$ docker pull alani/hugo

Now that we have an image for Hugo, we can run a container with it and use Hugo to create a new site.

$ mkdir ahmedalani.com

$ cd ahmedalani.com

$ docker run --rm -v `pwd`:/src alani/hugo new site .

Breaking down the command quickly:

- docker run --rm: Run a temporary container. It is removed as soon as it exits.
- -v `pwd`:/src: Mount the current directory on my machine, /ahmedalani.com, at /src in the container. /src is the container’s working directory.
- alani/hugo new site .: Run the container using the alani/hugo image we just downloaded. Because the container represents the Hugo process, everything after the image name is passed to Hugo as arguments, here new site . This generates a Hugo site in the container’s working directory and, through the mount, in our host machine’s directory.

At this point if we ls, our directory should contain a Hugo site template.

- ahmedalani.com

----- archetypes\

----- config.yaml

----- content\

--------- posts\

----- data\

----- layouts\

----- static\

----- ...

Time to create our first post. Let’s also make running the container easier by using the alias command.

$ alias hugo="docker run -it --rm -v `pwd`:/src -p 1313:1313 alani/hugo"

$ hugo new post/so-recursive-it-hurts.md

/src/content/post/so-recursive-it-hurts.md created
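One subtlety with the alias above: because the backticks sit inside double quotes, `pwd` is expanded when the alias is defined, not when it is used. The alias therefore keeps mounting whichever directory you were in when you created it. If you'd rather have the mount follow your current directory, a shell function is one option. This is a minimal sketch; the name `hugo_docker` is made up for illustration and is not part of the image.

```shell
# Hypothetical wrapper (not from the alani/hugo image): a function instead of
# an alias, so the mounted directory is resolved each time it is called.
hugo_docker() {
  # "$@" forwards all arguments to Hugo unchanged, e.g. `new post/foo.md`
  docker run -it --rm -v "$(pwd)":/src -p 1313:1313 alani/hugo "$@"
}
```

Usage mirrors the alias: `hugo_docker new post/so-recursive-it-hurts.md`. Quoting `"$(pwd)"` also keeps the mount working if the directory path contains spaces.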

After adding some content (like this blog post), we can preview the blog locally using Hugo’s built-in webserver.

$ hugo server -w --baseUrl="http://localhost:1313" --bind=0.0.0.0

0 of 0 draft rendered

0 future content

1 pages created

0 paginator pages created

0 tags created

0 categories created

in 34 ms

Watching for changes in /src/{data,content,layouts,static,themes/hugo-incorporated}

Serving pages from /src/public

Web Server is available at http://0.0.0.0:1313/

Press Ctrl+C to stop

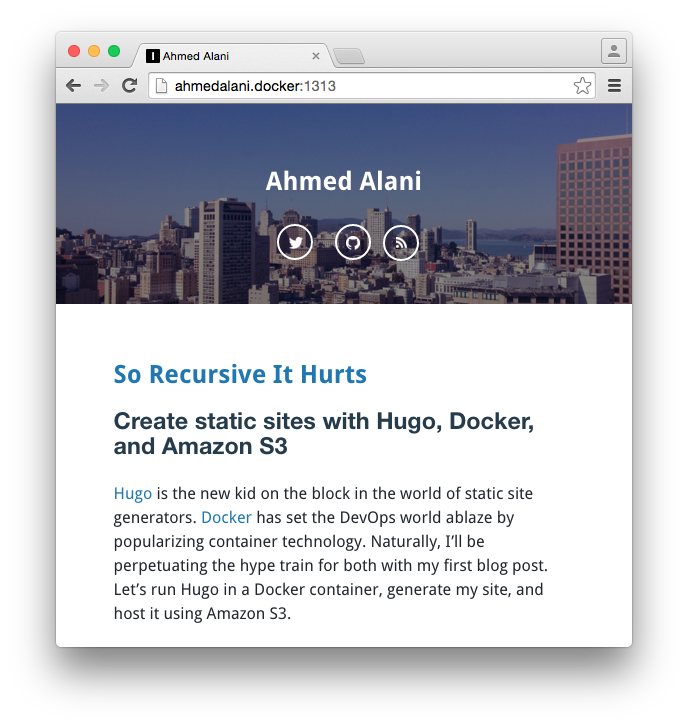

We’re up! Hugo will launch a web server in the container and serve up the site on port 1313. It will also watch the source code in the /src volume, then regenerate and live-reload the site if we make any edits.

Quick side notes:

- In the alias command, I bound the container’s port 1313 to my host port 1313, otherwise I wouldn’t have been able to access the site from my browser.
- Ctrl+C will cause the Hugo web server to stop and this will exit our container.

Hosting on Amazon S3

The final step is to host our static site somewhere. This is fairly trivial to do with Amazon S3 and gives us the benefit of Amazon’s reliability and CDN. Follow this guide to set up an S3 bucket with correct permissions.

We can use the handy s3_website tool to synchronize files with our S3 bucket when we make an update. And because I’ve discovered a shiny new hammer, I’ve created a Docker image that encapsulates s3_website and its dependencies as well.

Pull down the image.

$ docker pull alani/s3website

Create the S3 config and add your bucket information, including AWS credentials. Don’t check s3config.yml into source control.

$ alias s3website="docker run -i -t --rm -v `pwd`:/src alani/s3website"

$ s3website cfg create # Creates s3config.yml

$ open s3config.yml # Add your S3 information & credentials. Don't check in
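If the site lives in a Git repository, one way to make sure the credentials file never gets committed is to list it in .gitignore. The sketch below assumes Git is the source control in use. Ignoring `public/` as well is my own addition, on the assumption that generated output doesn't need to be versioned; drop it if you prefer to track the build.

```shell
# Assumes the site directory is (or will become) a Git repository.
# Append each entry only if it is not already listed, so re-running is harmless.
for entry in s3config.yml public/; do
  grep -qxF "$entry" .gitignore 2>/dev/null || echo "$entry" >> .gitignore
done
```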

It’s time to generate the final set of files and upload to S3.

$ hugo --baseUrl="http://ahmedalani.com"

0 of 0 drafts rendered

0 future content

1 pages created

0 paginator pages created

0 tags created

0 categories created

in 107 ms

$ s3website push --site public

[info] Deploying public/* to ahmedalani.com

[succ] Created index.xml (application/rss+xml)

...

[info] Summary: Created 39 files. Transferred 1.6 MB, 2.1 MB/s.

[info] Successfully pushed the website to http://ahmedalani.com.s3-website-us-east-1.amazonaws.com
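Since publishing is always the same two steps, they can be rolled into one helper. This is only a sketch: `publish` is a hypothetical name, and it spells out the `docker run` commands rather than reusing the aliases because Bash does not expand aliases in non-interactive scripts by default. It also drops the interactive flags on the assumption that neither command needs to prompt during a push.

```shell
# Hypothetical helper, not part of either image: build the site, then push it.
publish() {
  base_url="${1:-}"
  if [ -z "$base_url" ]; then
    echo "usage: publish <base-url>" >&2
    return 2
  fi
  # Render the final files into ./public; stop here if Hugo fails
  docker run --rm -v "$(pwd)":/src alani/hugo --baseUrl="$base_url" || return 1
  # Upload ./public using the settings in s3config.yml
  docker run --rm -v "$(pwd)":/src alani/s3website push --site public
}
```

Run from the site directory, `publish "http://ahmedalani.com"` would perform the same build and push shown above, and skip the upload if the build fails.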

Final Thoughts

I wrote this article to see if Docker can serve as a useful wrapper for everyday tools and so far I’m pretty happy using it across multiple machines. As you’ve probably deduced, dockerizing tools can get out of control if we start creating bloated containers for every single simple process. Try to build and use lean images and, at the end of the day, remember that this is just another tool in your belt.